1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

102

103

104

105

106

107

108

109

110

111

112

113

114

115

116

117

118

119

120

121

122

123

124

125

126

127

128

129

130

131

132

133

134

135

136

137

|

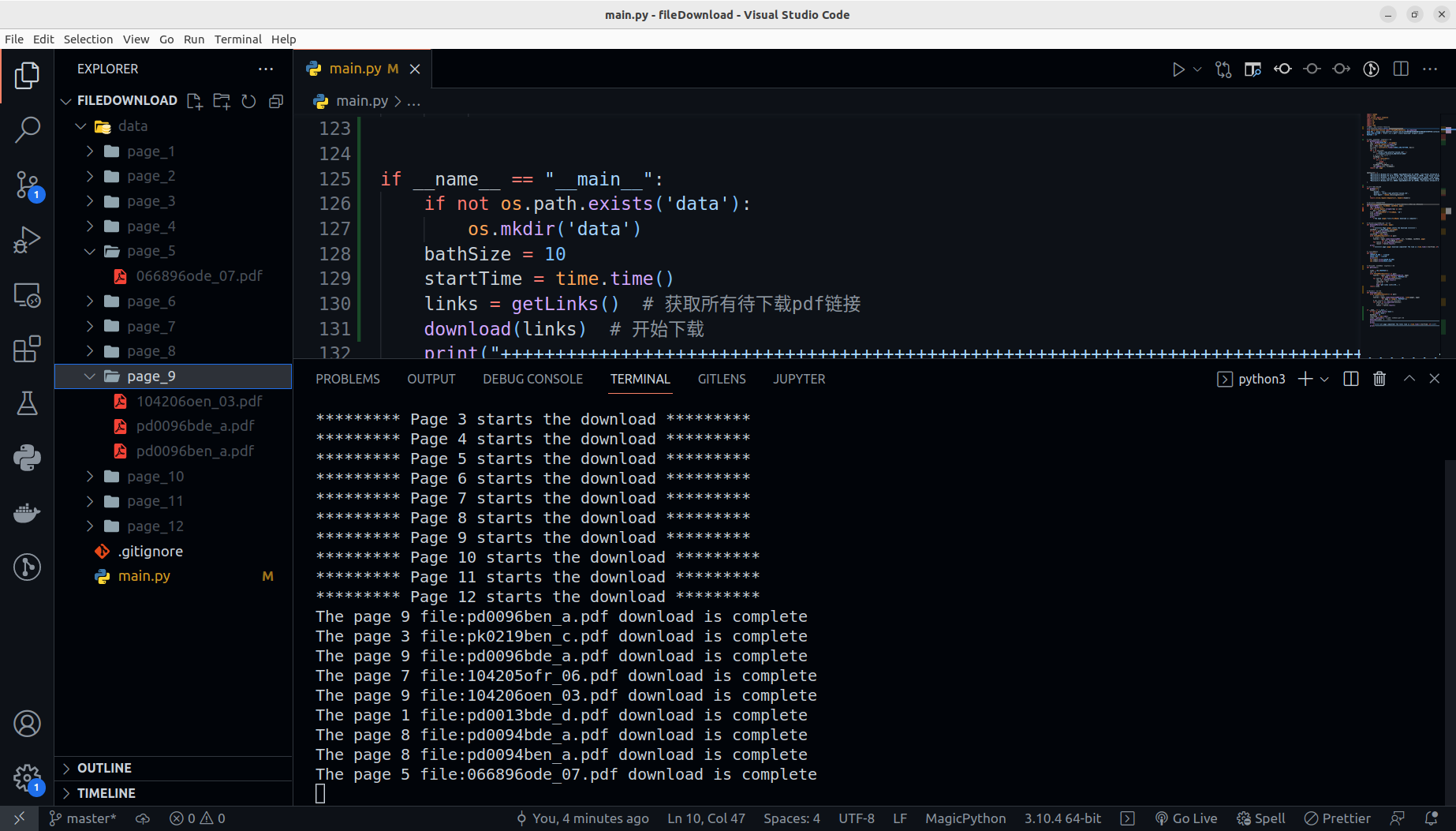

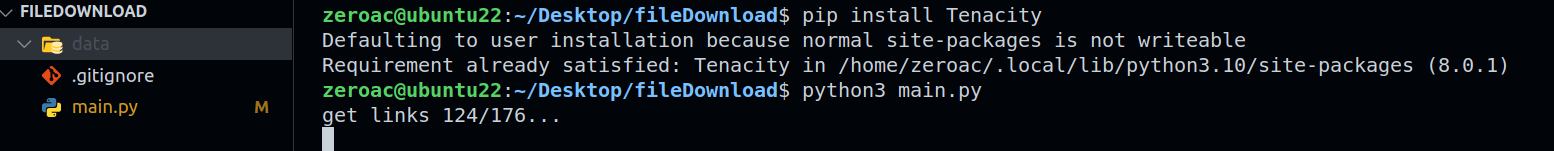

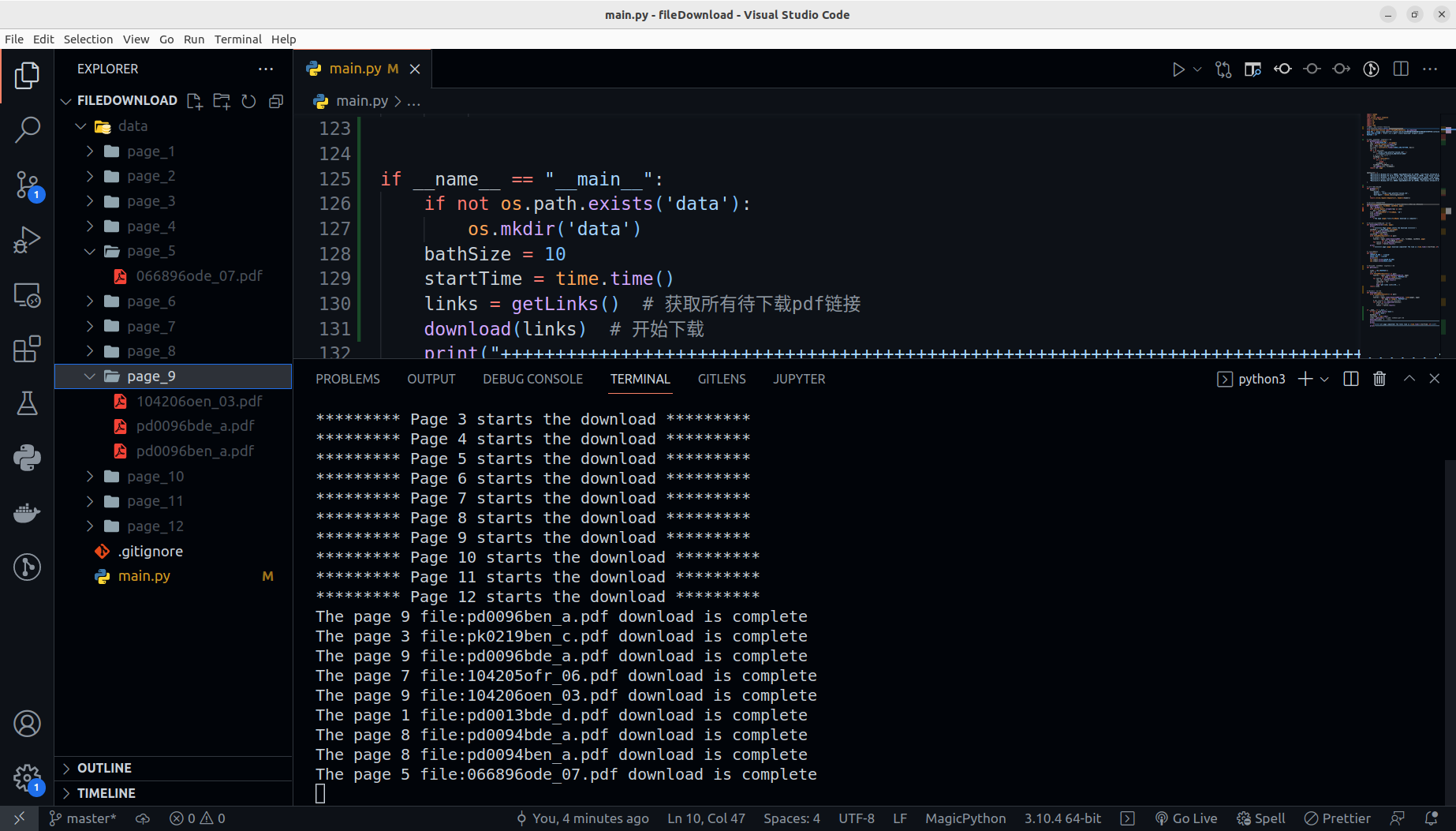

import random

import time

from urllib import response

import urllib.request

import os

import sys

import re

import time

from tenacity import retry, stop_after_attempt

from concurrent.futures import ThreadPoolExecutor, as_completed

BASE_URL = 'https://www.pfeiffer-vacuum.com/zh/%E4%B8%8B%E8%BD%BD%E4%B8%AD%E5%BF%83/container.action?searchQuery=&categoryChoice=15&categoryChoice=%E6%93%8D%E4%BD%9C%E6%89%8B%E5%86%8C&categoryChoice=25&categoryChoice=19&categoryChoice=23&categoryChoice=%E7%BB%B4%E6%8A%A4%E8%AF%B4%E6%98%8E&categoryChoice=%E5%AE%A3%E4%BC%A0%E5%86%8C&categoryChoice=60&categoryChoice=56&categoryChoice=50&categoryChoice=9&categoryChoice=4&categoryChoice=12&categoryChoice=1&categoryChoice=27&categoryChoice=3&categoryChoice=7&categoryChoice=2&categoryChoice=8&categoryChoice=62&categoryChoice=57&categoryChoice=5&categoryChoice=11&categoryChoice=13&categoryChoice=10&categoryChoice=14&categoryChoice=54&categoryChoice=55&categoryChoice=34&categoryChoice=36&categoryChoice=33&categoryChoice=35&categoryChoice=41&categoryChoice=42&categoryChoice=48&categoryChoice=39&categoryChoice=40&categoryChoice=43&categoryChoice=45&categoryChoice=61&categoryChoice=49&categoryChoice=37&search=true&page='

BOOK_LINK_PATTERN = 'href=".*=(.*.pdf)" class="download" target="_blank"'

MAXPAGE = 177

def getDownLoadLink(page):

req = getReq(BASE_URL + str(page))

html = urllib.request.urlopen(req)

doc = html.read().decode('utf8')

url_list = list(set(re.findall(BOOK_LINK_PATTERN, doc)))

ret = []

for v in url_list:

url = "https://www.pfeiffer-vacuum.com" + \

v+"?request_locale=zh_CN&referer=2063"

fileName = ""

for i in reversed(v):

if i == '/':

break

fileName += i

fileName = fileName[::-1]

ret.append((url, fileName))

return ret, page

agnetsList = [

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/39.0.2171.71 Safari/537.36",

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36",

"Mozilla/5.0 (Windows; U; Windows NT 6.1; en-US) AppleWebKit/534.16 (KHTML, like Gecko) Chrome/10.0.648.133 Safari/534.16",

"Mozilla/5.0 (Linux; Android 4.0.4; Galaxy Nexus Build/IMM76B) AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.133 Mobile Safari/535.19",

"Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.94 Safari/537.36"

]

def getReq(url):

headers = {

'Accept': '*/*',

'Referer': 'https://www.pfeiffer-vacuum.com',

'User-Agent': random.choice(agnetsList)

}

return urllib.request.Request(url, headers=headers)

@retry(stop=stop_after_attempt(6))

def downloadPdf(url, fileName, savePath, page):

req = getReq(url)

with urllib.request.urlopen(req) as conn:

buf = conn.read()

file = open(savePath+"/"+fileName, 'wb')

file.write(buf)

file.close()

print(

f'The page {page} file:{fileName} download is complete')

def downloadParallel(links, page):

print(

f'********* Page {page} starts the download *********')

savePath = './data/page_'+str(page)

if not os.path.exists(savePath):

os.mkdir(savePath)

startTime = time.time()

with ThreadPoolExecutor() as pool:

futures = [pool.submit(downloadPdf, url, fileName, savePath, page)

for url, fileName in links]

for future in as_completed(futures):

result = future.result()

print(

f'********* page {page} download completed! The time is {time.time()-startTime:.2f} s *********')

def msg(txt):

CURSOR_UP_ONE = '\x1b[1A'

ERASE_LINE = '\x1b[2K'

print(txt)

sys.stdout.write(CURSOR_UP_ONE)

sys.stdout.write(ERASE_LINE)

def getLinks():

links = [0]*(MAXPAGE+1)

cnt = 0

with ThreadPoolExecutor() as pool:

futures = [pool.submit(getDownLoadLink, page)

for page in range(1, MAXPAGE+1)]

for future in as_completed(futures):

res, id = future.result()

links[id] = res

cnt += 1

msg(f'get links {cnt}/176...')

return links

def download(links):

with ThreadPoolExecutor() as pool:

futures = [pool.submit(downloadParallel, links[page], page)

for page in range(1, MAXPAGE+1)]

for future in as_completed(futures):

result = future.result()

if __name__ == "__main__":

if not os.path.exists('data'):

os.mkdir('data')

bathSize = 10

startTime = time.time()

links = getLinks()

download(links)

print("++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++\n")

print(

f"\t\t\t all page completed! The total time is {time.time()-startTime:.2f} s\n")

print("++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++\n")

|